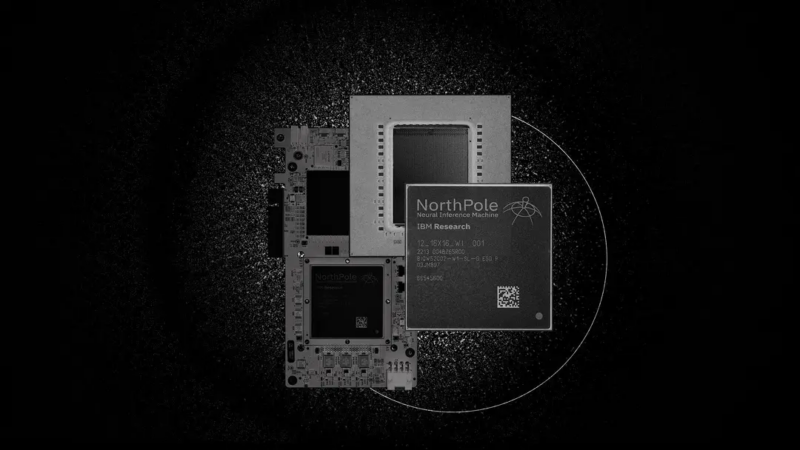

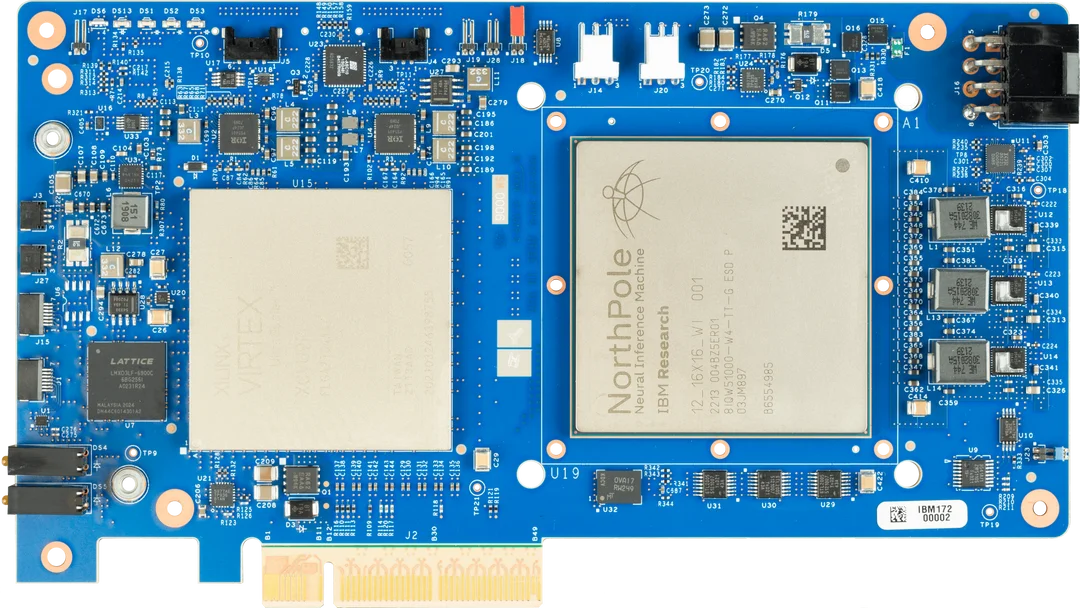

IBM's NorthPole machine learning processor: 22 billion transistors and a 25-fold gain in energy efficiency

IBM is at it again.

As AI systems develop rapidly, so do their energy requirements. Training a new system requires large data sets and a great deal of processor time, which makes it extremely energy-intensive. Running an already-trained system is far cheaper, and in some cases a smartphone can handle it easily. But if a trained system is run often enough, the energy spent on execution adds up as well.

Fortunately, there are many ways to reduce the energy consumed by execution. IBM and Intel have experimented with processors designed to mimic the behavior of actual neurons. IBM has also tested performing neural network calculations in phase-change memory to avoid repeated trips to RAM.

Now, IBM has another approach. The company's new NorthPole processor takes some of the ideas behind the approaches above and combines them with a very stripped-down way of running computations. The result is a highly energy-efficient chip for running neural network inference. For tasks such as image classification or audio transcription, the chip can be up to 35 times more energy-efficient than a GPU.

Official blog: https://research.ibm.com/blog/northpole-ibm-ai-chip

The NorthPole difference

NorthPole differs from traditional AI processors in three ways.

First, NorthPole does nothing to address the need to train neural networks; it is designed purely for execution.

Second, it is not a general-purpose AI processor; it is designed specifically for inference-focused neural networks. So if you want to use it for inference tasks such as working out the contents of an image or an audio clip, it is a good fit. But if you need to run a large language model, this chip doesn't seem to be of much use.

Finally, while NorthPole borrows some ideas from neuromorphic computing chips, it is not neuromorphic hardware because its processing units perform computations rather than emulating the spiking communications used by actual neurons.

NorthPole, like IBM's earlier TrueNorth chip, consists of a large array of compute cells (16×16), each with its own local memory and the ability to execute code. As a result, all the weights for the various connections in the neural network can be stored exactly where they are needed.

It also features extensive on-chip networking, with at least four different networks. Some of these networks carry information about completed computations to the next computing unit that needs them. Other networks are used to reconfigure the entire array of computing units, providing the neural weights and code needed to execute one layer of the neural network while the previous layer is still being computed. Finally, communication between adjacent computing units is optimized. This is useful for things like finding edges of objects in images. If adjacent pixels are assigned to adjacent computing units when an image is input, they can more easily cooperate to identify features that span adjacent pixels.
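To make the idea of assigning adjacent pixels to adjacent computing units concrete, here is a minimal sketch. The block mapping and the function name `core_for_pixel` are assumptions made for illustration; the article does not describe how NorthPole actually distributes image data across its array.

```python
GRID = 16  # the article describes a 16x16 array of compute cells


def core_for_pixel(x, y, width, height, grid=GRID):
    """Return the (row, col) of the compute cell that owns pixel (x, y).

    Hypothetical block mapping: the image is cut into grid x grid tiles,
    so neighboring pixels land on the same cell or on a neighboring cell.
    """
    col = x * grid // width
    row = y * grid // height
    return row, col


# A 224x224 image splits into 14x14-pixel tiles, one tile per cell.
print(core_for_pixel(13, 0, 224, 224))    # last pixel column of the first tile
print(core_for_pixel(14, 0, 224, 224))    # first pixel column of the next tile
print(core_for_pixel(223, 223, 224, 224)) # bottom-right pixel
```

With a mapping like this, a feature that straddles a tile boundary only ever involves cells that are direct neighbors, which is the case the article says the chip's communication is optimized for.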

Beyond that, NorthPole's computing resources are unusual. Each unit is optimized for low-precision calculations, ranging from 2-bit to 8-bit. To keep these execution units busy, they cannot perform conditional branches based on the values of variables. In other words, user code cannot contain if statements. This simple execution model allows massive parallelism within each computing unit. At 2-bit precision, each unit can perform more than 8,000 calculations in parallel.
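The two constraints described above, low-precision values and no data-dependent branches, can be illustrated in a few lines of plain Python. This is only a sketch under assumptions: the symmetric quantization scheme, the choice of ReLU, and the function names are illustrative and are not taken from IBM's software.

```python
def quantize(values, bits):
    """Map floats in [-1, 1] to signed integers of the given bit width.

    Assumed scheme: symmetric scaling with saturation. min/max are used
    here for readability; hardware would use a saturating operation.
    """
    qmax = 2 ** (bits - 1) - 1   # 1 for 2-bit, 7 for 4-bit, 127 for 8-bit
    qmin = -(2 ** (bits - 1))
    return [max(qmin, min(qmax, round(v * qmax))) for v in values]


def relu_branch_free(x):
    """ReLU written without an if statement.

    The comparison yields 0 or 1, which is used as a multiplier, so every
    input follows the same instruction sequence.
    """
    return x * (x > 0)


def neuron(inputs, weights):
    """Integer multiply-accumulate followed by the branch-free activation."""
    acc = sum(i * w for i, w in zip(inputs, weights))
    return relu_branch_free(acc)


weights = [0.6, -1.0, 0.9, 0.0]
print(quantize(weights, 8))
print(quantize(weights, 4))
print(quantize(weights, 2))
print(neuron([1, 1, 1, 1], quantize(weights, 4)))
```

Because every value takes the same path through the code, many such operations can be issued side by side, and narrower values mean more of them fit in the same hardware width.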

Supporting software

Because of this unusual design, the NorthPole team had to develop its own training software, which works out things like the minimum level of precision each layer needs in order to operate successfully. Executing neural networks on the chip is also a relatively unusual process.

Once the neural network's weights and connections are placed in on-chip buffers, execution simply requires an external controller to upload the input data and tell the chip to start. Everything else runs without involving the CPU, which limits system-level power consumption.

The NorthPole test chip is manufactured on a 12nm process, which is far behind the leading edge. Still, the team managed to fit 256 computing units, each with 768 KB of memory, into a chip with 22 billion transistors. Compared with Nvidia's V100 Tensor Core GPU, which is built on a similar process, NorthPole performed 25 times as many calculations for the same power consumption.
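The figures above imply a total on-chip memory that is easy to work out. The short calculation below assumes 1 KB = 1,024 bytes and, for the weight counts, that all of the memory holds weights, which is an upper bound rather than a description of how the chip is used in practice.

```python
CORES = 16 * 16        # 256 computing units
KB_PER_CORE = 768      # local memory per unit, per the article

total_kb = CORES * KB_PER_CORE
total_mb = total_kb / 1024   # assumes 1 KB = 1,024 bytes


def max_weights(bits):
    """Upper bound on stored weights if ALL memory held weights (assumption)."""
    total_bits = total_kb * 1024 * 8
    return total_bits // bits


print(total_mb)          # 192.0 MB of on-chip memory in total
print(max_weights(8))    # 201326592 weights at 8-bit precision
print(max_weights(2))    # 805306368 weights at 2-bit precision
```

Lower precision therefore pays off twice: more calculations run in parallel, and more of a network fits in memory next to the units that use it.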

Under the same conditions, NorthPole outperforms state-of-the-art GPUs by approximately five times. Tests of the system have shown that it can also efficiently perform a range of widely used neural network tasks.

Original link: https://mp.weixin.qq.com/s/p6pl-WUxfCuJTUJGagbNGg