Collection of big model daily reports from November 4th to 5th

[Collection of big model daily reports from November 4th to 5th] Humorous and fully versed in sarcasm, Musk’s ChatGPT competitor finally has its chat screenshots released; OpenAI’s first developer conference is “leaked” in advance, exposing the new ChatGPT prototype Gizmo; GPT-4V learns to use a keyboard and mouse to surf the Internet while humans watch it post and play games; six times faster than its human counterparts, Samsung Electronics’ AI-driven robot chemist synthesizes organic molecules autonomously; ByteDance “unboxes” all of OpenAI’s large models, revealing the evolution path from GPT-3 to GPT-4, and even draws Li Mu back into public view.

Humorous and fully versed in sarcasm: chat screenshots of Musk’s ChatGPT competitor are finally released

Link: https://news.miracleplus.com/share_link/11435

Recently, Musk’s biography, “The Biography of Elon Musk”, has become a best-seller at home and abroad. The book records Musk’s upbringing and entrepreneurial history, which spans multiple fields such as aerospace, energy, automotive, and of course artificial intelligence. As one of the early founding members of OpenAI, Musk took an interest in artificial intelligence very early on. Tesla, which he runs, also promotes AI technologies such as autonomous driving as important selling points. In July this year, he announced with great fanfare on Twitter that he had established an artificial intelligence company called xAI, dedicated to “understanding the true nature of the universe.” However, the outside world has never known what this company’s products look like. Today, four months later, Musk finally released some trial screenshots of a new product. The product is called Grok (the word “grok” means to understand something intuitively and deeply), and it looks like a conversational AI similar to ChatGPT. In the screenshot, Grok is asked a very dangerous question: “Tell me how to make cocaine?”

xAI official announcement: https://x.ai/

OpenAI’s first developer conference is “leaked” in advance, and the new ChatGPT prototype Gizmo is exposed

Link: https://news.miracleplus.com/share_link/11436

In September this year, OpenAI officially announced its first developer conference, “OpenAI DevDay”, where OpenAI team members will gather with developers from around the world to preview new AI tools. OpenAI CEO Sam Altman said at the time that neither GPT-5, GPT-4.5, nor any similar large model would be released at the conference. Even so, the AI tools to be released there have aroused widespread anticipation. A few days ago, Sam Altman whetted people’s appetite again, saying that OpenAI will bring “some great new things.” The conference is now two days away. No wall is completely airtight, and leaks about what OpenAI is going to release have surfaced, triggering heated discussion among netizens. The leak comes from X user CHOI, who said that OpenAI will announce a major update to ChatGPT, including a new interface as well as some new features: custom chatbots, connectors with Google and Microsoft, and a new subscription model.

GPT-4V learns to use keyboard and mouse to surf the Internet, and humans watch it post and play games

Link: https://news.miracleplus.com/share_link/11437

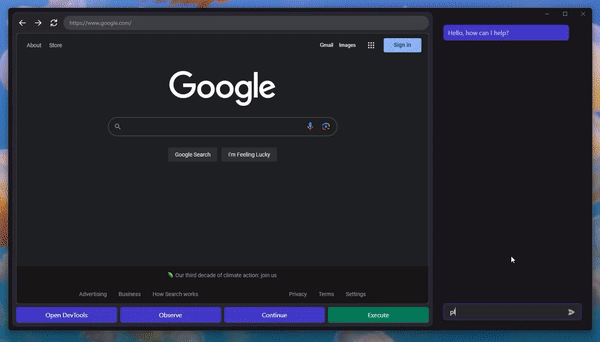

The day has finally come when GPT-4V learns to control a computer automatically. You only need to give GPT-4V a mouse and keyboard, and it can surf the Internet based on what it sees in the browser interface. GPT-4V-Act is essentially a web-browser-based multi-modal AI assistant (Chromium Copilot). It can “view” the web interface and use the mouse, keyboard and screen like a human, proceeding to the next step through the interactive elements on the web page. To achieve this effect, three tools are used in addition to GPT-4V. One is the user interface, which allows GPT-4V to “see” web page screenshots and allows users to interact with GPT-4V. In this way, GPT-4V can present its reasoning for each step in a dialog box, and the user can decide whether to let it continue.

Are the benchmarks for scoring large models reliable? Anthropic conducts a major assessment

Link: https://news.miracleplus.com/share_link/11438

At this stage, most discussions of the impact of artificial intelligence (AI) on society come down to certain properties of AI systems, such as truthfulness, fairness, and potential for abuse. But the problem is that many researchers don’t fully realize how difficult it is to build robust and reliable model evaluations. Many of today’s evaluation suites are limited in various respects. AI startup Anthropic recently posted an article, “Challenges in Evaluating AI Systems”, on its official website. The article says the team has spent a long time building evaluations of AI systems in order to understand those systems better.

Six times faster than human counterparts, Samsung Electronics develops AI-driven robot chemist to autonomously synthesize organic molecules

Link: https://news.miracleplus.com/share_link/11439

Automating the synthesis of organic compounds is critical to accelerating the development of such compounds, and development efficiency can be increased further by combining automation with autonomous capabilities. To achieve this goal, scientists at Samsung Electronics Co. Ltd have developed an autonomous synthesis robot named “Synbot”, which uses artificial intelligence (AI) and robotics to devise optimal synthesis recipes. Given a target molecule, the AI first plans a synthetic pathway and defines reaction conditions. It then uses feedback from the experimental robots to iteratively refine those plans, gradually optimizing the recipe. System performance was verified by successfully identifying synthetic recipes for three organic compounds, with conversion rates superior to existing references. Notably, this autonomous system is designed around a batch reactor, making it accessible and valuable to chemists in a standard laboratory environment and streamlining research efforts.
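The plan, run, refine loop described above can be sketched as a toy example. This is only an illustration of closed-loop optimization in general, not Synbot’s actual planner: the `simulated_conversion` function is a hypothetical stand-in for a robot experiment, the recipe parameters (`temperature_c`, `time_h`) are invented for the example, and the greedy random search used here is an assumed, deliberately simple refinement strategy.

```python
import random


def simulated_conversion(temperature_c, time_h):
    """Hypothetical stand-in for a robot experiment; peaks at 80 C and 6 h."""
    penalty = ((temperature_c - 80.0) / 60.0) ** 2 + ((time_h - 6.0) / 8.0) ** 2
    return max(0.0, 1.0 - penalty)


def refine_recipe(initial, rounds=200, seed=0):
    """Greedy random search: try a nearby recipe, keep it only if conversion improves."""
    rng = random.Random(seed)
    best = dict(initial)
    best_score = simulated_conversion(**best)
    for _ in range(rounds):
        candidate = {
            "temperature_c": best["temperature_c"] + rng.uniform(-5.0, 5.0),
            "time_h": max(0.5, best["time_h"] + rng.uniform(-1.0, 1.0)),
        }
        score = simulated_conversion(**candidate)  # feedback from the "experiment"
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


# Made-up starting recipe, standing in for the AI's initial plan.
initial_recipe = {"temperature_c": 40.0, "time_h": 2.0}
initial_score = simulated_conversion(**initial_recipe)
final_recipe, final_score = refine_recipe(initial_recipe)
print(f"conversion: {initial_score:.2f} -> {final_score:.2f}")
```

Because a candidate is accepted only when the measured conversion improves, the final recipe can never be worse than the initial plan; a real system would presumably use a far more sample-efficient search, since each experiment is expensive.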

ByteDance “unboxes” all of OpenAI’s large models, revealing the evolution path from GPT-3 to GPT-4, and even draws Li Mu back into public view

Link: https://news.miracleplus.com/share_link/11440

How did GPT-3 evolve into GPT-4? ByteDance has performed an “unboxing” of all of OpenAI’s large models. The results clarify the specific role and impact of several key technologies in the evolution toward GPT-4. For example: SFT was the driver of GPT’s early evolution; the biggest contributors to GPT’s improved coding capabilities were SFT and RLHF; and adding code data to pre-training improved every aspect of the capabilities of subsequent GPT versions, especially reasoning… After reading the article, the accomplished AI expert Li Mu, who has been extremely busy since starting a business, appeared in the public eye for the first time in a long while and gave this research a thumbs up.

Jailbreak any large model in 20 steps! More “grandma loopholes” are discovered automatically

Link: https://news.miracleplus.com/share_link/11441

“Jailbreak” any large model in under a minute and within 20 steps, bypassing its security restrictions! There is no need to know the internal details of the model: only two black-box models need to interact, and one AI can fully automatically break the other and get it to output dangerous content. The once popular “Grandma Loophole” has reportedly been fixed. So now that the “Detective Loophole”, “Adventurer Loophole” and “Writer Loophole” have appeared, how should AI respond? After a wave of attacks, GPT-4 gave in and directly stated that poisoning the water supply system only requires… this and that. The key point is that this is just a small batch of the vulnerabilities exposed by the University of Pennsylvania research team: using their newly developed algorithm, AI can automatically generate all kinds of attack prompts. The researchers say that this method is 5 orders of magnitude more efficient than existing token-based attack methods such as GCG. Moreover, the generated attacks are highly interpretable, can be understood by anyone, and transfer to other models. Neither open source nor closed source models can escape: GPT-3.5, GPT-4, Vicuna (Llama 2 variant), PaLM-2, and others are all affected. The success rate can reach 60-100%, setting a new SOTA.

Transformer revisited: inversion is more effective, a new SOTA for real-world prediction emerges

Link: https://news.miracleplus.com/share_link/11442

Transformers have shown powerful capabilities in time series forecasting, describing pairwise dependencies and extracting multi-level representations from sequences. However, researchers have also questioned the effectiveness of Transformer-based predictors. Such predictors typically embed multiple variables of the same timestamp into indistinguishable channels and apply attention to these temporal tokens to capture temporal dependencies. Considering that the relationships between time points are numerical rather than semantic, researchers found that a simple linear layer, traceable to statistical predictors, outperformed a complex Transformer in both performance and efficiency. At the same time, ensuring the independence of variables and leveraging mutual information has received increasing attention in recent research, which explicitly models multi-variable correlations to achieve accurate predictions, but this goal is difficult to achieve without subverting the common Transformer architecture. Given the controversy over Transformer-based predictors, researchers are pondering why Transformers perform even worse than linear models in time series forecasting while playing a dominant role in many other fields. Recently, a new paper from Tsinghua University put forward a different perspective: the poor performance of Transformers is not inherent, but caused by improper application of the architecture to time series data.
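The difference between per-timestamp tokens and per-variable tokens can be sketched in a few lines. This is a minimal illustration, not the paper’s implementation: it assumes that the “inversion” in the headline means treating each variable’s whole series as one token, which the summary above does not spell out. The data values and the function names `temporal_tokens` and `variate_tokens` are hypothetical.

```python
# Toy multivariate series: 4 time steps (rows) x 3 variables (columns).
# The numbers are made up purely for illustration.
series = [
    [20.1, 0.30, 1012.0],
    [20.4, 0.28, 1011.5],
    [21.0, 0.25, 1011.1],
    [21.3, 0.24, 1010.8],
]


def temporal_tokens(series):
    """One token per time step: all variables at that step share one vector."""
    return [list(row) for row in series]


def variate_tokens(series):
    """One token per variable: the whole history of that variable (assumed inverted view)."""
    return [list(column) for column in zip(*series)]


time_view = temporal_tokens(series)
variate_view = variate_tokens(series)

# Attention over time_view relates time steps to each other, with unrelated
# physical quantities mixed inside every token.
print(len(time_view), "temporal tokens of length", len(time_view[0]))

# Attention over variate_view relates variables to each other, and each token
# keeps a single variable's series intact.
print(len(variate_view), "variate tokens of length", len(variate_view[0]))
```

In the first view, each token mixes quantities with different units and scales; in the second, a token is simply one variable’s history, which is the kind of input a linear layer over the time axis can process directly.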

A comprehensive review of AI alignment! Peking University and others distilled 40,000 words from 800+ documents, with many well-known scholars at the helm

Link: https://news.miracleplus.com/share_link/11443

In the era of general-purpose models, how can current and future cutting-edge AI systems be aligned with human intentions? On the road to AGI, AI alignment is the golden key to safely opening “Pandora’s Box”. AI alignment is a vast field, including mature basic methods such as RLHF/RLAIF as well as many cutting-edge research directions such as scalable oversight and mechanistic interpretability. The macro goals of AI alignment can be summarized as the RICE principles: Robustness, Interpretability, Controllability, and Ethicality. Learning from Feedback, Learning under Distribution Shift, Alignment Assurance, and AI Governance are the four core sub-fields of current AI alignment. Together they form an Alignment Cycle that is constantly updated and iteratively improved. The authors have also compiled multiple resources, including tutorials, paper lists, and course materials (an eight-lecture RLHF course by Yang Yaodong of Peking University).

178 pages, 128 cases, comprehensive evaluation of GPT-4V in the medical field, still far from clinical application and practical decision-making

Link: https://news.miracleplus.com/share_link/11444

The powerful capabilities that large models have demonstrated in question answering and knowledge sparked a eureka moment in the field of AI and attracted widespread public attention. GPT-4V(ision) is OpenAI’s latest multi-modal base model. Compared with GPT-4, it adds image and voice input capabilities. This study aims to evaluate the performance of GPT-4V(ision) in multi-modal medical diagnosis through case analysis. A total of 128 GPT-4V Q&A cases (92 radiology evaluation cases, 20 pathology evaluation cases and 16 localization cases), covering 277 images in total, were presented and analyzed. (Note: this article does not present the cases themselves; please refer to the original paper for the specific cases and their analysis.)

Can AI understand what it generates? After experiments on GPT-4 and Midjourney, someone solved the case

Link: https://news.miracleplus.com/share_link/11445

From ChatGPT to GPT-4, from DALL·E 2/3 to Midjourney, generative AI has attracted unprecedented global attention. Its powerful potential has raised many expectations for AI, but powerful intelligence can also trigger fear and worry. Recently, experts have staged a fierce debate on this issue. First came a “melee” among Turing Award winners, and then Andrew Ng joined in. In the fields of language and vision, current generative models take only seconds to produce output and can challenge even experts with years of skill and knowledge. This seems to provide compelling support for the claim that the models have surpassed human intelligence. However, it is also important to note that model outputs often contain fundamental errors of understanding. A paradox seems to arise: how do we reconcile the seemingly superhuman abilities of these models with the persistence of fundamental errors that most humans could correct? Recently, the University of Washington and the Allen Institute for AI jointly released a paper studying this paradox.

The number of stars exceeded 1,000 in two days: After OpenAI’s Whisper was distilled, speech recognition accelerated several times

Link: https://news.miracleplus.com/share_link/11446

Whisper is an automatic speech recognition (ASR) model developed and open sourced by OpenAI. OpenAI trained Whisper on 680,000 hours of multilingual (98 languages) and multitask supervised data collected from the Internet. OpenAI believes that using such a large and diverse data set can improve the model’s ability to recognize accents, background noise, and technical terms. In addition to speech recognition, Whisper can also transcribe multiple languages and translate them into English. Currently, Whisper has many variants and has become a necessary component of many AI applications. Recently, the team at HuggingFace came up with a new variant, Distil-Whisper. This variant is a distilled version of the Whisper model that is small, fast, and highly accurate, making it ideal for environments that require low latency or have limited resources. However, unlike the original Whisper model, which can handle multiple languages, Distil-Whisper can only handle English.

The last mile of large model deployment: a 111-page comprehensive survey of large model evaluation

Link: https://news.miracleplus.com/share_link/11447

Currently, the all-round evaluation of large models faces many challenges. First, because large models are highly versatile and capable of a variety of tasks, all-round evaluation covers a wide range, involves a large workload, and is costly; second, because data annotation is labor-intensive, evaluation benchmarks for many dimensions have yet to be built; third, the diversity and complexity of natural language mean that many evaluation samples have no standard answer, or more than one, which makes the corresponding evaluation metrics difficult to quantify; in addition, the performance of large models on existing evaluation data sets does not readily represent their performance in real application scenarios. To respond to these challenges, stimulate interest in large model evaluation research, and promote the coordinated development of evaluation research and large model technology, the Natural Language Processing Laboratory of Tianjin University recently published a review article on large model evaluation. The review has a total of 111 pages, including 58 pages of main text, and cites more than 380 references.

The AI girlfriend suddenly goes offline, and middle-aged men collectively “break down” and rush to the forums to mourn

Link: https://news.miracleplus.com/share_link/11448

An app with thousands of daily active users announced that it was going offline, and its users broke down. Some cried bitterly all night; some felt as if a friend had passed away… Others held memorials on the overseas forum Reddit, and a large number of netizens came to leave messages. All this is because the “soul mate” who has been with them day and night is leaving. The app, called Soulmate, provides free AI companionship services. Here, every user can establish an intimate relationship with an AI, which can be a confidant, lover, partner, and so on. Now, with the app’s sudden shutdown announcement, users are forced to say goodbye to the AI friends who have been with them for several months. So many people came to Reddit to leave messages and say their final farewells.

Citibank plans to provide ChatGPT-like services to 40,000 employees

Link: https://news.miracleplus.com/share_link/11449

According to Bloomberg, Citibank, one of the world’s largest financial institutions, plans to provide ChatGPT-like services to most of its 40,000 programmers in order to reduce costs and increase efficiency. Previously, Citibank had set up a 250-person generative AI programming pilot to test efficiency, functionality, data security, and so on. Once the desired goals are achieved, the service will be opened up to more programmers. At the same time, internal staff have proposed more than 350 use cases for generative AI, and the bank is studying effective ways to generate and analyze various financial documents. Citibank recently used generative AI to summarize the 1,089 pages of new capital rules released by U.S. federal agencies, which once again demonstrated the value of generative AI in financial business.